Stop Burning Compute.

Heavyweight APIs like GPT-4 are expensive and slow for simple tasks. We help you distill that work into small, locally hosted language models that run faster, keep your data entirely private, and cost pennies on the dollar.

Model Distillation

Lean, specialized models engineered for one specific job, without the overhead of a general-purpose model.

Latency Shield

Zero external API dependencies means split-second inference, cutting response times from 5s down to 200ms.

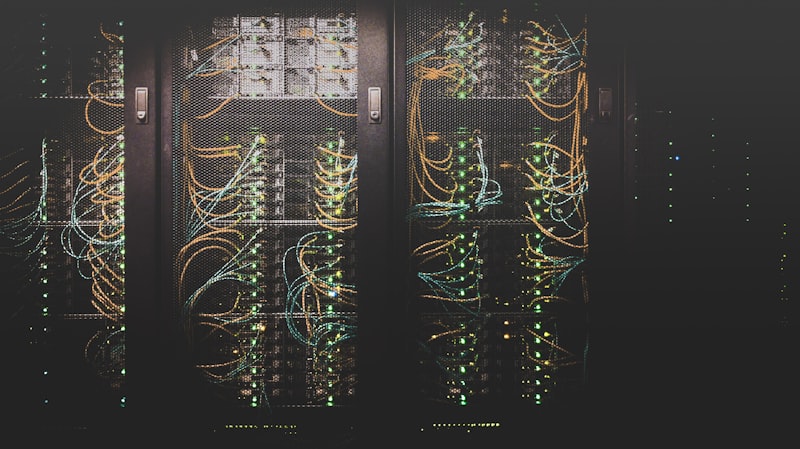

Because we strip away the heavy computational overhead of general-purpose agents, scaling your AI operations adds pennies, not hundreds of dollars, to your monthly spend. Our custom deployments protect your inference pipelines against erratic latency spikes, guaranteeing rock-solid availability.